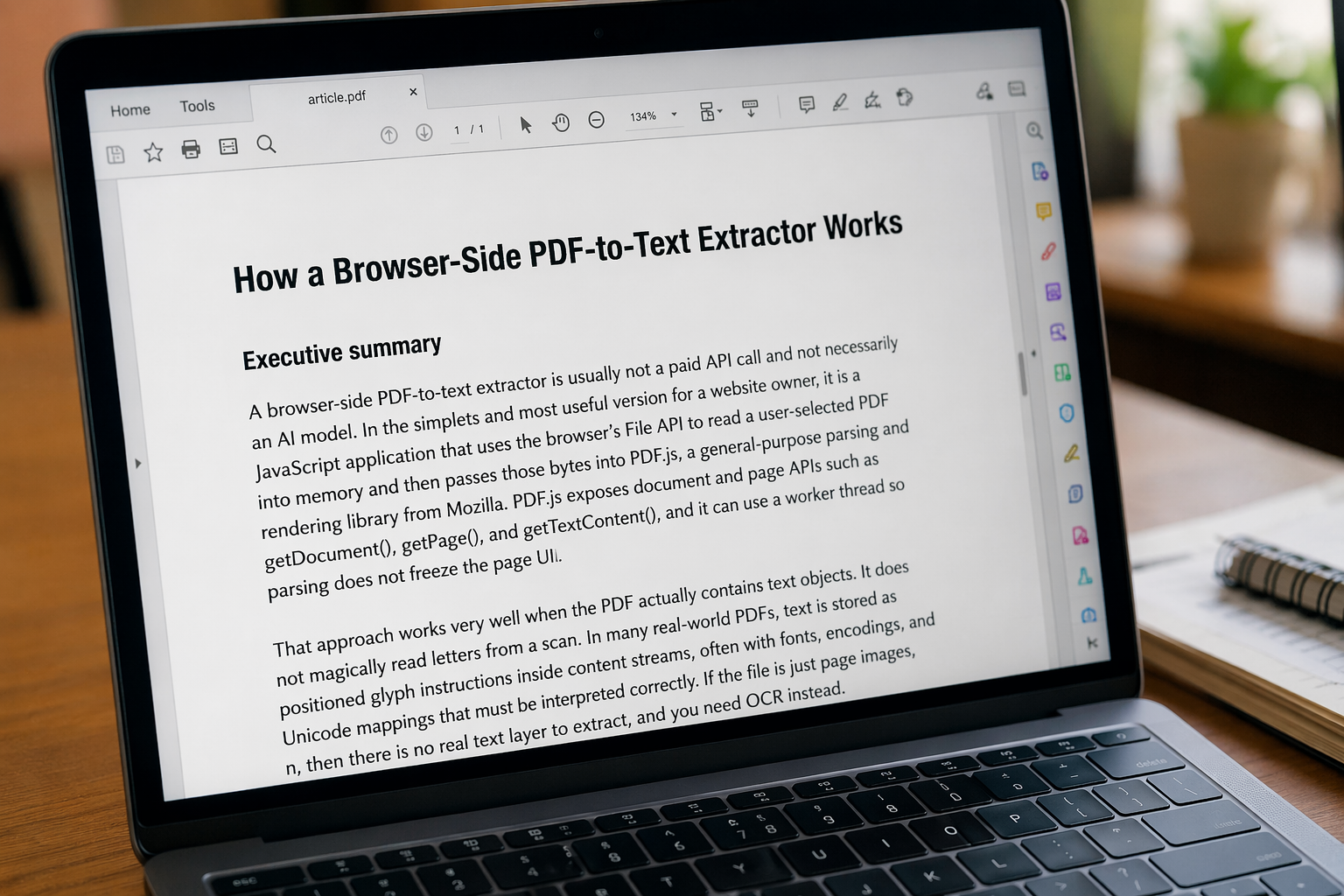

How a Browser-Side PDF-to-Text Extractor Works

Executive summary

A browser-side PDF-to-text extractor is usually not a paid API call and not necessarily an AI model. In the simplest and most useful version for a website owner, it is a JavaScript application that uses the browser’s file tools to read a user-selected PDF into memory and then passes those bytes into PDF.js, a general-purpose parsing and rendering library from Mozilla.

PDF.js exposes document and page functions such as getDocument(), getPage(), and getTextContent().

It can also use a worker thread so PDF parsing does not freeze the page interface.

This approach works very well when the PDF actually contains text objects. It does not magically read letters from a scan. In many real-world PDFs, text is stored as positioned glyph instructions inside content streams, often with fonts, encodings, and Unicode mappings that must be interpreted correctly. If the file is just page images, then there is no real text layer to extract, and OCR is needed instead.

For OCR, the most practical browser options today are libraries such as Tesseract.js and transformer-based OCR running through Hugging Face Transformers.js. Tesseract.js can run in the browser and supports many languages, but it does not read PDF files directly; it expects images. Transformer OCR can also run in the browser, usually through ONNX Runtime on CPU, WebAssembly, or WebGPU, but browser-ready OCR models are much heavier to download than PDF.js and are usually more complex to integrate.

For a traffic-focused WordPress tool, the best first build is usually simple: use a shortcode, load PDF.js, add a clear privacy notice, handle errors well, and surround the tool with useful explanatory content written for people. For indexing, make sure the page is published, not marked noindex, internally linked, and submitted in Google Search Console if needed.

A normal PDF often already contains real text. PDF.js reads that existing text from the PDF structure. OCR is only needed when the PDF is scanned or image-based.

A beginner-friendly introduction

Imagine the tool from the visitor’s point of view. They open your page. Their browser downloads the page HTML, CSS, and JavaScript. Then they choose a PDF from their own device using a normal file picker. At that point, JavaScript receives a file object representing the selected PDF.

The browser can then read that file locally with tools such as FileReader or arrayBuffer().

The important privacy point is this: the browser only gets access to files the user explicitly selected.

A website cannot randomly read files from a visitor’s computer.

That is why a browser-side tool feels more private than a server-upload tool. The file can be processed entirely on the user’s device if the code simply reads the selected file and keeps the bytes in browser memory.

1. Server-side upload tool: the PDF is sent to your server and extraction happens there.

2. Browser-side tool: the PDF stays in the browser memory and extraction happens on the user’s device.

For a free, no-API-bill website utility, the browser-side model is usually the better fit. It reduces hosting costs for the site owner and gives the visitor a quick tool experience. The trade-off is that performance depends on the visitor’s browser and device, not your server.

The browser-side PDF extraction flow

↓

Browser creates a file object

↓

JavaScript reads the file as binary data

↓

PDF.js opens the PDF data

↓

PDF.js reads each page

↓

The tool asks each page for text content

↓

Text items are joined together

↓

Extracted text appears in the output box

What a PDF really contains

A PDF is not simply a text file with pages. It is a structured document format made of objects. A PDF may contain pages, fonts, images, streams, dictionaries, text operators, metadata, and other internal pieces.

A normal PDF page may contain instructions such as:

Use this font.

Place the word "Autism" at position x=100, y=700.

Place the word "therapy" at position x=160, y=700.

Place the word "helps" at position x=230, y=700.To the user, the PDF looks like a normal page. But inside the file, it may contain many instructions about fonts, positions, pages, images, and text. A PDF parser can read those instructions and collect the text.

A very small conceptual PDF example

%PDF-1.2

1 0 obj

<< /Type /Page /Parent 5 0 R /Resources 3 0 R /Contents 2 0 R >>

endobj

2 0 obj

<< /Length 51 >>

stream

BT

/F1 24 Tf

1 0 0 1 260 254 Tm

(Hello World) Tj

ET

endstream

endobj

This example is simplified, but it shows the idea.

A page object can point to a content stream, and the content stream can contain text operators.

Operators such as BT, ET, Tf, Tm, and Tj help describe where and how text should be drawn.

Why extracted PDF text can look strange

PDF text is often stored as visual drawing instructions, not as clean paragraphs. A PDF can place each word, text chunk, or even each glyph independently. The rendered page may look perfect, but the internal text order may be fragmented.

That is why extracted text can sometimes have:

- unusual spacing,

- line breaks in strange places,

- columns mixed together,

- table text in the wrong order,

- headers and footers mixed into the main text,

- symbols or characters that do not copy correctly.

This does not always mean the tool is broken. It often means the PDF itself stores the content as positioned fragments instead of clean article-style text.

Fonts, encodings, and hidden text layers

Fonts and encoding make PDF text extraction even more interesting. A PDF may store glyph identifiers rather than simple Unicode characters. To copy or search the text correctly, software may need to map those glyphs back to Unicode characters.

This is why some PDFs display correctly but extract badly. The viewer can draw the page, but the text-to-Unicode mapping may be missing, unusual, or incomplete.

Another important detail is invisible text. Some PDFs, especially scanned PDFs processed by OCR software, may contain an invisible text layer behind the visible page image. The user sees the scanned image, but the PDF also includes hidden selectable text.

One scanned PDF may contain a hidden OCR text layer. Another scanned PDF may contain only page images with no text layer at all.

How PDF.js and the browser do the work

PDF.js is structured in layers. The core layer parses and interprets the binary PDF. The display layer exposes APIs for loading documents, accessing pages, rendering pages, and extracting information. The viewer layer is what gives a full PDF viewer interface.

For a simple PDF-to-text tool, the developer normally uses the display layer.

The main entry point is getDocument().

The selected PDF file can be converted into an ArrayBuffer, then passed into PDF.js as data.

const file = fileInput.files[0];

const data = await file.arrayBuffer();

const pdf = await pdfjsLib.getDocument({ data }).promise;

for (let pageNumber = 1; pageNumber <= pdf.numPages; pageNumber++) {

const page = await pdf.getPage(pageNumber);

const textContent = await page.getTextContent();

const pageText = textContent.items

.map(item => item.str)

.join(" ");

}In simple words: the browser reads the selected file, PDF.js opens the PDF, each page is checked for text items, and each text item has a string value. The tool joins those strings and shows the extracted text to the user.

What getTextContent() returns

The getTextContent() function does not simply return one perfect paragraph.

It returns a text-content object with many text items.

Each item can contain information such as:

str— the actual text string,dir— text direction,transform— placement and transformation,widthandheight,fontName,hasEOL— whether a line break follows.

A simple tool may only use item.str and join the strings.

A more advanced tool can use position and line information to rebuild paragraphs more carefully.

That is why a basic extractor is easy to build, but a perfect layout-preserving extractor is much harder.

Why scanned PDFs need OCR

A scanned PDF is fundamentally different from a normal text PDF. A scanned PDF may contain only images of pages. Internally, it may be closer to this:

Page 1:

Show image scan001.jpgA human sees words in the image, but the PDF parser only sees an image. There are no text objects to extract. That is why a browser-side PDF-to-text extractor should show a clear message such as:

OCR means Optical Character Recognition. OCR is used when the computer must look at an image and recognize letters from shapes. That is a different problem from PDF text extraction.

Does this PDF already contain real text that I can decode?

OCR asks:

Can I look at this image and recognize the letters?

The main browser-side OCR options

Tesseract.js

Tesseract.js is a JavaScript OCR library that can run in the browser. It is useful for extracting text from images, screenshots, and rendered page images. It can support many languages, but it usually needs language data and more processing time than simple PDF text extraction.

Tesseract.js does not directly read PDF files as PDFs. If the input is a scanned PDF, the usual approach is:

- Open the PDF with PDF.js.

- Render each PDF page as an image or canvas.

- Send that image to the OCR engine.

- The OCR engine recognizes letters.

- The recognized text is shown to the user.

Transformers.js and TrOCR

A more advanced route is transformer-based OCR in the browser. Hugging Face Transformers.js can run models directly in the browser using ONNX Runtime, CPU, WebAssembly, or WebGPU. OCR models such as TrOCR can recognize text from images, but they are much heavier than PDF.js.

For a public website tool, browser transformer OCR can mean larger downloads, slower startup, more memory use, and more complicated user experience. That does not mean it is bad. It means it is more advanced and should usually come after the simple PDF.js version.

Comparison: PDF.js vs Tesseract.js vs Transformers.js OCR

| Attribute | PDF.js | Tesseract.js | Transformers.js + TrOCR |

|---|---|---|---|

| Runs in browser? | Yes | Yes | Yes |

| What it is | PDF parser, renderer, extractor | OCR engine in JavaScript/WebAssembly | Machine-learning runtime plus OCR model |

| Best for | Extracting existing text from PDFs | OCR from images or rendered PDF pages | AI OCR from images |

| PDF input support | Yes | Not directly; needs image input | Not direct PDF parsing; works on images |

| Speed | Usually fastest for selectable-text PDFs | Slower because it recognizes letters from images | Usually heaviest in browser |

| Complexity | Low to medium | Medium | Medium to high |

| Best first choice? | Yes, for normal PDFs | Good second step for scanned files | Advanced option later |

Combining PDF.js and OCR

If you want to support scanned PDFs later, the combined approach is:

- Open the PDF with PDF.js.

- Loop through the pages.

- Render each page to canvas.

- Turn the canvas into an image.

- Send the image to OCR.

- Collect the recognized text.

- Show the OCR result to the user.

Example OCR pipeline idea

// Example idea only

const pdfData = await file.arrayBuffer();

const pdf = await pdfjsLib.getDocument({ data: pdfData }).promise;

for (let pageNumber = 1; pageNumber <= pdf.numPages; pageNumber++) {

const page = await pdf.getPage(pageNumber);

const viewport = page.getViewport({ scale: 2 });

const canvas = document.createElement("canvas");

const context = canvas.getContext("2d");

canvas.width = Math.ceil(viewport.width);

canvas.height = Math.ceil(viewport.height);

await page.render({

canvasContext: context,

viewport

}).promise;

const imageBlob = await new Promise(resolve => canvas.toBlob(resolve, "image/png"));

// Then send imageBlob to an OCR engine such as Tesseract.js

}This is much heavier than simple text extraction because the browser must render pages as images and then run OCR on each image. For multi-page scanned PDFs, this can take time.

Building and shipping the tool on WordPress

A lightweight browser-side PDF extractor fits neatly into a custom WordPress plugin. The clean deployment pattern is:

- a shortcode outputs the tool interface,

- CSS styles the tool,

- JavaScript handles file selection and text extraction,

- PDF.js reads the PDF,

- copy and download buttons help the user reuse the result,

- a privacy note explains that the file is designed to be processed in the browser.

Sample WordPress plugin structure

ingo-pdf-to-text-extractor/

ingo-pdf-to-text-extractor.php

assets/

pdf-to-text.js

pdf-to-text.css

pdfjs/

pdf.min.js

pdf.worker.min.js

README.md

THIRD_PARTY_NOTICES.txtSample PHP shortcode structure

<?php

/**

* Plugin Name: INGOAMPT PDF to Text Extractor

* Description: Browser-side PDF text extraction using PDF.js.

* Version: 1.0.0

*/

if (!defined('ABSPATH')) {

exit;

}

function ingo_pdf_text_register_assets() {

wp_enqueue_style(

'ingo-pdf-text-style',

plugin_dir_url(__FILE__) . 'assets/pdf-to-text.css',

array(),

'1.0.0'

);

wp_enqueue_script(

'ingo-pdf-text-script',

plugin_dir_url(__FILE__) . 'assets/pdf-to-text.js',

array(),

'1.0.0',

true

);

}

add_action('wp_enqueue_scripts', 'ingo_pdf_text_register_assets');

function ingo_pdf_text_shortcode($atts = array(), $content = null) {

ob_start();

?>

<div class="ingo-pdf-text-tool">

<label for="ingo-pdf-file"><strong>Choose a PDF</strong></label>

<input type="file" id="ingo-pdf-file" accept=".pdf,application/pdf">

<div class="ingo-pdf-actions">

<button type="button" id="ingo-pdf-extract-btn">Extract text</button>

<button type="button" id="ingo-pdf-copy-btn">Copy text</button>

<button type="button" id="ingo-pdf-download-btn">Download .txt</button>

</div>

<p id="ingo-pdf-status" aria-live="polite"></p>

<textarea

id="ingo-pdf-output"

rows="18"

placeholder="Extracted text will appear here..."

></textarea>

</div>

<?php

return ob_get_clean();

}

add_shortcode('ingo_pdf_to_text', 'ingo_pdf_text_shortcode');

The shortcode should be shown safely in articles as:

[ingo_pdf_to_text].

Using escaped brackets prevents WordPress from trying to run the shortcode inside an explanatory article.

Privacy wording for a browser-side PDF tool

Privacy: This tool is designed to read the PDF you choose directly in your browser for text extraction.

The file is not intended to be uploaded to our server for extraction. Please avoid using highly confidential, sensitive, medical, legal, financial, or private documents in online tools.

If the website loads PDF.js from an external CDN, the browser needs to request that technical library file from the CDN. If the website owner hosts PDF.js locally inside the plugin, fewer third-party requests are needed. In both cases, the visitor still needs internet to open the website itself.

Why does the tool need internet if processing is local?

The extraction can happen locally, but the webpage still needs to load first. The user needs internet to open the website, load the page, load the CSS, load the JavaScript, and load any library files such as PDF.js.

After the tool is loaded, the selected PDF can be processed in the browser. The PDF itself does not need to be uploaded to a server for extraction in this design.

Getting the page indexed properly

A tool page should not be only a file input and a button. If you want the page to have a better chance in search engines, add useful explanatory content around it. Explain what the tool does, when it works, when it fails, and how the technology works.

A strong indexing setup for this type of page usually includes:

- a clear title such as Free PDF to Text Extractor,

- a meta description explaining what the page does,

- a useful introduction above or below the tool,

- FAQ content,

- internal links from related tools and articles,

- normal crawlable links using

<a href="">, - no

noindexsetting on the page, - submission through Google Search Console if needed.

Suggested SEO title

Free PDF to Text Extractor | Extract Text from PDF OnlineSuggested meta description

Extract text from PDF files directly in your browser. Free PDF to text tool for selectable PDFs. Scanned or image-based PDFs may require OCR.Questions and answers

What exactly is doing the extraction if there is no AI model?

PDF.js is doing the extraction. It reads the internal structure of the PDF and collects text items that already exist inside the file. This is document parsing, not artificial intelligence.

Is this a free API?

No. In the browser-side PDF.js approach, there is no paid extraction API. The browser downloads JavaScript code and runs it on the user’s device. The tool uses library functions, not a per-call cloud service.

Is the selected PDF uploaded to the website server?

Not in this browser-side design. A file input can be used either for local processing or for upload, depending on how a site is programmed. In this tool, the goal is to read the file locally in the browser and extract text there.

Why does one PDF work and another PDF fail?

One PDF may contain real selectable text, while another may contain only images of pages. PDF.js can extract real text, but it cannot read letters from an image without OCR.

Why does one scanned PDF extract text and another scanned PDF fail?

Some scanned PDFs include a hidden OCR text layer. The page may look like an image, but the PDF may still contain invisible selectable text. Another scanned PDF may be pure image-only with no text layer at all.

Why is the extracted text sometimes messy or in the wrong order?

PDFs store positioned text instructions, not always clean paragraphs. Multi-column layouts, tables, headers, footers, and complex positioning can produce awkward extraction order or spacing.

Can it work offline?

The processing can be local after the tool is loaded, but a normal website still needs internet to load its HTML, CSS, JavaScript, and library assets. Offline use would require a special offline setup or cached assets.

Will it work on mobile devices?

It can work on modern mobile browsers, but very large PDFs may be slower on phones because the user’s own device is doing the work.

Why does local testing sometimes fail with worker errors?

PDF.js often expects to be served through a proper web server rather than opened as a local file. On WordPress, this is usually solved because the page is served through HTTP or HTTPS.

Why not use OCR for everything?

OCR solves a harder problem than plain PDF text extraction. If a PDF already contains real text, PDF.js extraction is usually faster and lighter. OCR is best used as a fallback for scanned or image-based PDFs.

Do I need both PDF.js and OCR?

Not for the first version. PDF.js alone is enough if the tool is clearly described as a PDF-to-text extractor for selectable-text PDFs. OCR can be added later for scanned PDFs and images.

Conclusion

A browser-side PDF-to-text extractor works because modern browsers can read user-selected files locally, and PDF.js can parse structured PDF content without sending the file to a remote extraction API. When a PDF contains a real text layer, this approach is elegant, fast, and inexpensive for the site owner. When the file is image-based, you leave the world of PDF parsing and enter the world of OCR.

Understanding that boundary is the key design decision behind a good public tool page. Start with PDF.js for selectable PDFs. Explain the limitation clearly. Then add OCR later if you want to support scanned PDFs and images.

Explore More from INGOAMPT

INGOAMPT creates practical explanations, free browser-based tools, and iOS apps around AI, deep learning, learning support, productivity, and useful digital workflows. The goal is to make complex technology easier to understand and easier to use.

If you are interested in more tools, articles, and apps, explore the INGOAMPT website and our iOS applications. For questions, updates, and professional contact, you can also follow or message INGOAMPT on LinkedIn.

Continue with INGOAMPT

Discover more free tools, read AI articles, explore INGOAMPT iOS apps, and connect with us on LinkedIn.

Visit INGOAMPT See INGOAMPT iOS Apps Follow INGOAMPT on LinkedIn

Sources and further reading

- Mozilla PDF.js official website

- PDF.js Getting Started

- PDF.js API documentation

- PDF.js PDFPageProxy and text content API

- PDF.js examples

- MDN FileReader documentation

- MDN Blob arrayBuffer documentation

- MDN file input documentation

- Tesseract OCR input format documentation

- Tesseract.js official website

- Hugging Face Transformers.js documentation

- Hugging Face TrOCR documentation

- WordPress shortcode documentation

- WordPress script enqueue documentation

- Google helpful content guidance

- Google noindex guidance

- Google crawlable links guidance

- Google Search Console URL Inspection tool