Mastering Hyperparameter Tuning & Neural Network Architectures: Exploring Bayesian Optimization, Day 19

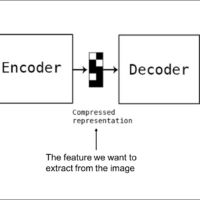

In conclusion, Bayesian optimization in this example does not change the internal structure of the model: the number of layers, the activation functions, and the way gradients are computed all stay as they are. Instead, it searches over external hyperparameters, the settings that control how the model behaves during training and how it processes the data but are not part of the architecture itself. In the code for this walkthrough, those external settings are the only values Bayesian optimization adjusts. The result is a better-performing model without needing to re-architect or directly modify the model's components....
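To make the idea concrete, here is a minimal, self-contained sketch of a Bayesian optimization loop written with only the Python standard library. It is not the code this post refers to. Everything in it is an assumption for illustration: the single tuned hyperparameter (the base-10 log of a learning rate), the toy `validation_loss` function that stands in for training a fixed model, the RBF kernel settings, and the helper names. The point is only that the loop proposes hyperparameter values and never touches the model itself.

```python
import math
import random


def validation_loss(log_lr):
    """Hypothetical stand-in for 'train the fixed model, return validation loss'.

    Toy quadratic with its minimum at log10(lr) = -3; not a real training run.
    """
    return (log_lr + 3.0) ** 2 + 0.1


def rbf(a, b, length=1.0):
    """Squared-exponential kernel between two scalar inputs."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-4):
    """Gaussian-process posterior mean and std at x_new (constant-mean prior)."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mean_y = sum(ys) / len(ys)
    alpha = solve(K, [y - mean_y for y in ys])
    mu = mean_y + sum(k * a for k, a in zip(k_star, alpha))
    v = solve(K, k_star)
    var = max(rbf(x_new, x_new) - sum(k * w for k, w in zip(k_star, v)), 1e-12)
    return mu, math.sqrt(var)


def expected_improvement(mu, sigma, best):
    """Expected improvement for minimization."""
    z = (best - mu) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (best - mu) * cdf + sigma * pdf


def bayes_opt(objective, low, high, n_init=3, n_iter=10, seed=0):
    """Minimize objective over [low, high]; the model itself is never modified."""
    rng = random.Random(seed)
    xs = [rng.uniform(low, high) for _ in range(n_init)]
    ys = [objective(x) for x in xs]
    grid = [low + (high - low) * i / 60 for i in range(61)]
    for _ in range(n_iter):
        best = min(ys)
        # Skip candidates that were already evaluated.
        candidates = [x for x in grid if all(abs(x - s) > 1e-9 for s in xs)]
        x_next = max(
            candidates,
            key=lambda x: expected_improvement(*gp_posterior(xs, ys, x), best),
        )
        xs.append(x_next)
        ys.append(objective(x_next))
    i = ys.index(min(ys))
    return xs[i], ys[i]


best_log_lr, best_loss = bayes_opt(validation_loss, low=-6.0, high=0.0)
print(f"best learning rate ~ 10^{best_log_lr:.2f}, validation loss {best_loss:.3f}")
```

In practice you would replace `validation_loss` with a function that trains your unchanged model using the proposed settings and returns a validation metric, and you would likely use an established library rather than this hand-rolled Gaussian process.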