How the Brain Develops Language in Early Childhood

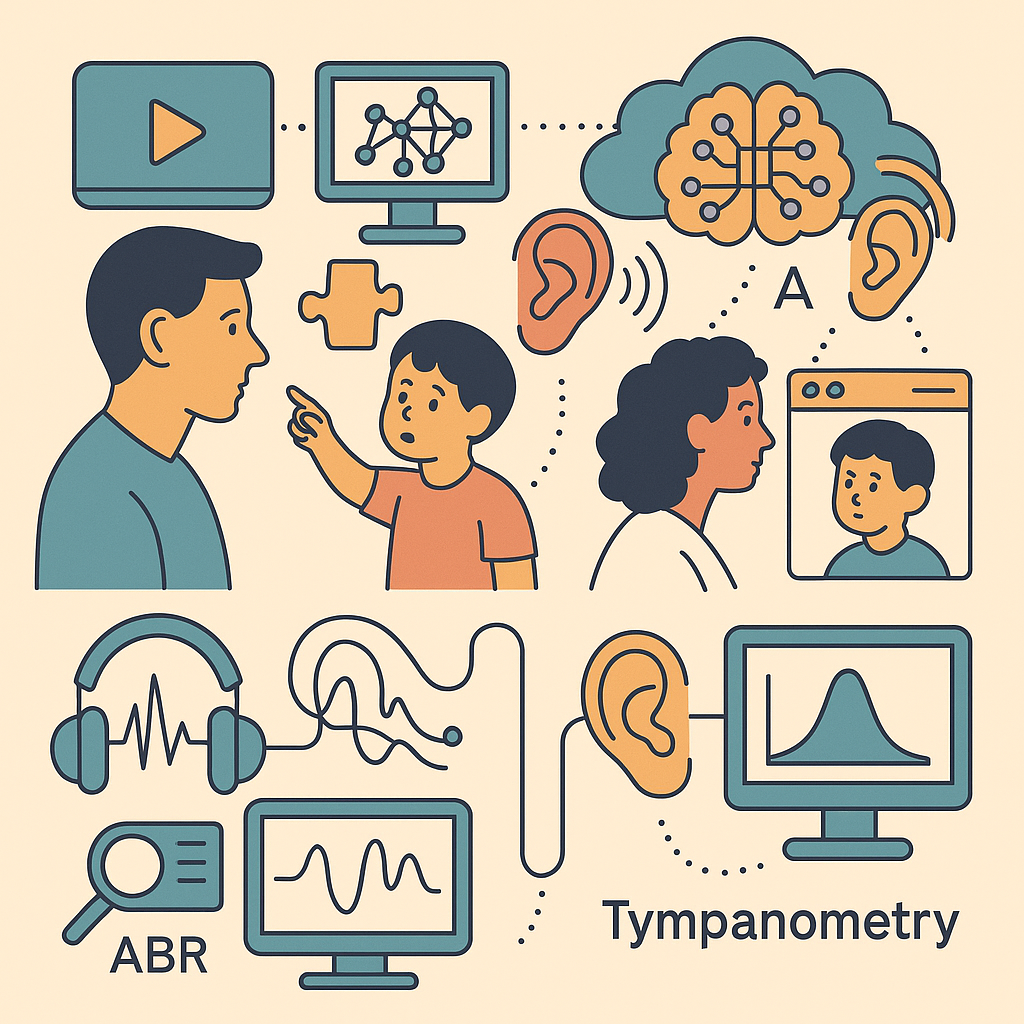

During the first few years of life, a child’s brain is rapidly forming connections that support language and communication. In fact, hearing and language networks in the brain grow explosively from birth to age 3, a critical “sensitive period” for learning to speak and understand words[1]. Key brain areas form a language network predominantly in the left hemisphere of the brain:

- Broca’s area (in the left frontal lobe) handles speech production and articulation, helping us turn ideas into words and coordinate mouth movements[2].

- Wernicke’s area (in the left temporal lobe) is crucial for understanding language: it processes the meaning of words we hear or read[2].

- These regions are connected by neural pathways (like the arcuate fasciculus), and other areas like the angular gyrus integrate sound with images and sensations (helping us link words to concepts)[3].

For a typical toddler, these brain regions work together so the child can learn that waving and saying “hello” connects to seeing a familiar face, or that pointing at a toy and saying its name gets a positive response. By around age 3, most children can understand hundreds of words and use simple sentences, indicating that their language network is actively coordinating speech and comprehension. Healthy social interactions – like smiling, eye contact, and joint attention (sharing focus on an object, e.g. pointing at a dog so Dad also looks) – further reinforce language learning[4][5]. Joint attention is a “powerful social learning tool” for language: when a toddler points at something and an adult labels it, the child links the word to the object[6]. All of these early experiences literally shape the brain by strengthening synapses (connections) in language and social regions. In short, the young brain is wired to absorb language through social interaction – it builds a neural foundation for speech by practicing sounds and exchanging attention with caregivers.

Why Autism Can Disrupt Language and Social Communication

In children with autism spectrum disorder (ASD), this typical process of language and social development often unfolds differently. Autism is a neurodevelopmental condition that affects how the brain processes social interaction, communication, and certain cognitive tasks[7][8]. Many autistic children have delays or differences in speech and understanding language, and these challenges go hand-in-hand with difficulties in social engagement (like making eye contact, imitating others, or pointing to show interest). From a scientific perspective, autism isn’t “just” a speech delay – it involves atypical development in the brain networks responsible for both verbal language and non-verbal communication.

Research using brain scans (like functional MRI) shows that children and adults with autism often use their brain’s language areas in a different way than typically developing peers. For example, one study found that people with autism may over-rely on posterior language regions in the temporal lobe (Wernicke’s area) and show less activation in the frontal language region (Broca’s area) during language tasks[9][10]. In other words, the “language network” in autism can be out of balance, with the back of the brain doing more work and the front doing less. Additionally, the synchronization or connectivity between front and back language areas is often reduced in autism[11]. This weaker connectivity means the language network may not coordinate as smoothly, potentially making it harder for the child to integrate understanding a word (temporal lobe) with formulating a response (frontal lobe).

Besides these language-specific regions, autism also affects the “social brain” – areas that help us understand and respond to other people. For instance, skills like pointing, sharing attention, and interpreting facial expressions involve brain regions such as the superior temporal sulcus (STS) (important for perceiving eye gaze and movement) and the dorsal medial prefrontal cortex (dMPFC) (involved in social thinking and empathy)[12][13]. In autism, studies have found atypical activity in these regions during social tasks. One experiment noted that autistic individuals did not show the typical increase in STS activation when engaging in joint attention (sharing focus on an object) – in fact, they had reduced activation in STS during joint attention, and paradoxically higher activation in STS when not engaging socially[14][13]. Scientists interpret this as a possible failure of the brain to specialize for social interaction[13]. In simple terms, the autistic brain might not automatically tune in to social cues like another person’s pointing or eye gaze, which are usually the natural triggers for language learning in toddlers.

Another piece of the puzzle is the ability to imitate sounds and movements, which is crucial for learning to talk (children often learn “bye-bye” by copying adults waving and saying the word). Researchers have theorized that the mirror neuron system – brain cells that fire both when you perform an action and when you see someone else do it – may function differently in autism[15]. Mirror neurons (found in areas like the frontal cortex) normally help infants copy gestures and even babble words by “mirroring” what they observe. If this system is underactive or atypical in autism, a child might not instinctively mimic saying “hello” or waving, making it harder to practice those social words. One review suggested that early failures in the mirror neuron system could lead to a cascade of developmental impairments characteristic of autism[16][17]. This could explain why some autistic children show little to no babbling or imitation in infancy – their brains aren’t getting the usual feedback from copying others, so the language circuitry doesn’t get the same workout.

Brain Development Differences: Why Don’t Some Language and Social Areas “Light Up”?

Scientifically, autism is linked to altered brain development beginning very early in life. Brain imaging studies have revealed that some autistic infants have atypical growth patterns in the brain. For example, many babies and toddlers with ASD show faster brain growth (brain volume) in infancy, sometimes resulting in larger head sizes (a phenomenon noted in about 20% of cases)[18][19]. This early overgrowth may sound like a good thing, but it can reflect extra or disorganized neural connections. As the child grows, the brain might have an excess of short-range connections (neurons talking to immediate neighbors) but fewer long-range connections that link different brain regions[20][21]. One theory (the “under-connectivity theory”) is that the autistic brain has trouble integrating information from different areas because the long-distance wiring is inefficient[20][21]. A language example might be difficulty linking the sound of a word to its social context – the connections between auditory language areas and frontal/social areas may be weak, so the child hears “hello” but their frontal cortex doesn’t strongly cue the social response (saying hello back).

There is also evidence of functional differences in specific language-related regions in autism. As mentioned, Broca’s and Wernicke’s areas are both present and used in autism, but the “workload” is distributed differently[9][10]. Autistic children sometimes show more diffuse activation (a wider, less focused pattern of brain activity) when doing language tasks, as if their brain is trying to recruit extra help to compensate[22][23]. For instance, a study of adolescents with ASD found less lateralization – instead of the left hemisphere dominating for language, both sides of the brain were more evenly active[24][25]. This suggests the typical specialization of the left hemisphere for language may be altered, possibly slowing down efficient language processing.

Importantly, social communication deficits (like not pointing or limited eye contact) are rooted in neurological differences too. The brain regions that underlie social-emotional skills can develop unusually in autism. The amygdala (involved in processing social-emotional significance) has been found in some studies to be enlarged or functioning differently in young children with autism, which could affect how rewarding social interaction feels[26][27]. If looking at faces or sharing attention isn’t registering strongly in the brain’s reward circuit, the child may not be motivated to engage in these interactions, thus missing out on language learning opportunities that come from social play. In some cases, researchers have even observed abnormal electrical activity (“spikes”) in language-related brain areas of autistic children. For example, a subset of children who are non-verbal or who have lost previously acquired skills (regression) shows epileptiform EEG discharges in the speech and auditory regions[28][29]. (There is a rare condition called Landau-Kleffner syndrome where epileptic activity causes a child to lose language, illustrating how disruptive abnormal spikes can be[28].) Not all autistic children have these EEG spikes, but when present, such brain “noise” could further interfere with the delicate process of developing speech. Overall, autism can be seen as different wiring and firing of the brain: certain areas (like those for social language) might remain under-activated or mis-connected, which may be why a child does not naturally start talking, pointing, or engaging in conversation.

Why Some Children Improve: The Brain’s Plasticity and Power of Practice

Encouragingly, the young brain is highly plastic, meaning it can change and reorganize with experience. Even if a child with autism starts out with weaker connections or less activity in certain areas, practice and intervention can strengthen those neural pathways. Many parents and therapists observe that with intensive practice (say, through speech therapy, social skills games, or behavioral therapy) a non-speaking child might gradually learn to say words or use gestures. Science backs this up: studies have shown that early intervention can lead to measurable changes in autistic children’s brains. In one study, toddlers with autism received a 16-week play-based therapy (focused on social and language skills), and afterwards fMRI scans showed that their brain activity in a key social region (pSTS) had become more similar to that of non-autistic children. Children who initially had lower activation in that area developed increased activity after therapy, and those who had hyper-activation normalized to typical levels. Along with these brain changes came improvements in social interaction and language use, demonstrating the brain’s ability to rewire in response to training.

Another study targeted core language skills through a structured reading and comprehension intervention for school-aged children with ASD. After 10 weeks of practice, the children showed stronger connectivity between Broca’s and Wernicke’s areas, as if the language circuit got bolstered by the exercises[30][31]. They also started recruiting additional frontal regions to help with language tasks[32][33]. This suggests the brain was finding new ways to compensate and improve understanding. Importantly, the degree of brain change often correlates with behavioral improvement – children who made the biggest gains in reading or speaking also showed the most notable shifts in their brain activation patterns[34][35].

How does practice spark these changes on a neural level? When a child repeatedly tries a skill, such as saying “bye-bye” with a wave, neurons that fire together start to wire together. For an autistic child who doesn’t naturally imitate, a therapist might physically prompt them to wave or use picture cards to practice “hello” and “goodbye.” Initially, this is effortful because the brain’s language and motor areas aren’t strongly linked for that action. But with repetition and rewards, the connections between the auditory cue (“say hello”), the social cue (seeing someone arrive), and the motor plan (moving the lips, making sound) get reinforced. Neuroplasticity means the brain can form new synapses and strengthen existing ones at any age, especially in early childhood[36][37]. In autism, interventions are thought to harness neuroplasticity to compensate for the atypical starting point[38][39]. Over time, even a child who was largely non-verbal at age 3 may learn, for example, to use a few words to make requests, because practice has activated their frontal language areas more and built a pathway for expressive language where there was none. In fact, preliminary evidence suggests early therapy can sometimes “re-route” development so effectively that some children move off the autism spectrum in later years[40][41]. This tends to happen when intervention starts during the brain’s most malleable stages (toddlerhood and preschool), taking advantage of windows when social and language circuits are eager to grow[40][42].
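The phrase “neurons that fire together wire together” is often written as a Hebbian learning rule, in which a connection strengthens in proportion to how active the two connected units are at the same moment. The toy sketch below only illustrates that idea. It is not a model of any real brain circuit or of the studies cited here, and the function names, learning rate, and decay value are assumptions chosen for readability.

```python
def hebbian_update(weight, pre, post, rate=0.1, decay=0.01):
    """One Hebbian step (illustrative values, not measured ones).

    The connection grows when both units are active together (pre * post)
    and shrinks slightly on every step through a small decay term.
    """
    return weight + rate * pre * post - decay * weight


def practice(trials, weight=0.0):
    """Apply the update once per trial; each trial is a (pre, post) activity pair."""
    for pre, post in trials:
        weight = hebbian_update(weight, pre, post)
    return weight


# Cue ("say hello") and response (saying it) occur together on every trial.
paired = practice([(1.0, 1.0)] * 20)

# The cue is present, but the response never follows it.
unpaired = practice([(1.0, 0.0)] * 20)

# A period with no practice at all, starting from the trained connection.
rested = practice([(0.0, 0.0)] * 20, weight=paired)
```

In this sketch the paired trials build up the connection, the unpaired trials leave it at zero, and a stretch without practice lets the trained connection fade a little, which mirrors the “use it or lose it” intuition in simplified form.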

It’s also worth noting that improvement is very individual. Some children respond rapidly to practice, while others progress slowly. Factors like the child’s initial developmental level, presence of co-occurring conditions (e.g. apraxia of speech, which is a motor planning disorder that makes speaking physically difficult), and even genetics can influence how much the brain changes with therapy. Nonetheless, the trend is clear: practice and structured learning can change the autistic brain. Imaging studies consistently show significant modifications in brain activation or connectivity following behavioral interventions in ASD[43][44]. The brain, in a sense, is learning how to learn – forging new routes to process language and social information. This is why some children who start out without any words may, after months or years of guided practice, suddenly begin to talk or use signs; their brain has gradually built the needed circuitry. It also explains why “if you don’t use it, you lose it”: without practice, neural pathways can weaken. Consistent engagement is key to maintaining and growing those communication skills in the brain.

Why There’s Still No “Language Pill” for Autism

If the core issue is in the brain, a reasonable question is: why not fix it with medicine? After all, we use medications for brain chemistry issues like ADHD or depression. The challenge is that autism’s language and social difficulties are not caused by a single chemical imbalance that a drug can easily adjust. They stem from complex patterns of brain wiring and development. As of today, no medication can cure or directly treat the core social and communication symptoms of autism[45]. For example, there’s no pill that instantly makes a child understand pointing or start speaking their first words, because those abilities involve learning and network synchronization in the brain, not just boosting a neurotransmitter level.

That said, medications can help with related issues. Many autistic children have co-occurring problems like hyperactivity, anxiety, or seizures, which can hinder learning. Drugs can manage some of those symptoms (e.g. reducing anxiety or preventing epileptic bursts that might disrupt the brain)[46][47]. By calming these interfering factors, medication can create better conditions for the child to focus on therapy and engagement. For instance, if a child is too hyperactive to sit through a speech therapy session, a doctor might prescribe a low dose of ADHD medication. But it’s important to understand that the medication isn’t teaching the child to talk – it’s simply addressing obstacles so that behavioral and educational interventions can work more effectively. The heavy lifting of language learning still relies on experience and practice, which is why therapies (speech therapy, occupational therapy, behavioral programs, etc.) are front and center in autism treatment.

Researchers haven’t given up on finding biomedical treatments for autism’s core traits. There have been trials of certain hormones and molecules (for example, oxytocin and vasopressin, which affect social bonding) to see if they could enhance social engagement, but so far no drug has shown consistent, large improvements in social or language skills across studies[48]. The diversity of autism plays a role here – what helps one child might not help another. Autism is a spectrum, and the underlying biology can differ from one individual to the next, making a one-size-fits-all pill elusive. Moreover, language itself is a learned behavior rooted in brain structure. Imagine trying to medicate someone into playing the piano without practice – it wouldn’t work because what’s needed is the formation of skill circuits via training. Similarly, with current knowledge, the best “medicine” for an autistic child struggling with communication is enriched learning environments, not a pharmaceutical compound. In summary, we lack a medical shortcut to build the complex neural web of language and social understanding, which is why therapy and education remain the cornerstones, and any claims of a quick cure should be viewed with skepticism.

Could AI Offer New Solutions for Communication in Autism?

Given the challenges above, researchers and clinicians are exploring new frontiers to help children with autism, and one promising avenue is artificial intelligence (AI). AI, in the form of smart software or even robots, has the potential to provide personalized and intensive training that adapts to each child’s needs. Here are a few ways AI might transform language and social interventions:

- Personalized Virtual Therapists: Imagine an AI-powered app or game that interacts with a child much like a speech therapist would – encouraging vocalization, modeling words, and responding to the child’s attempts. AI can analyze a child’s speech patterns in real time and gently prompt them to shape sounds correctly or try new words[49][50]. For nonverbal children, some AI tools can even convert gestures or pictures the child selects into spoken words through augmentative communication devices[51]. This kind of technology allows practice to happen at home every day, not just during weekly therapy sessions. Early studies show that AI-driven speech therapy apps can adjust to a child’s progress, making exercises more challenging or easier as needed, which keeps the child engaged and learning at their own pace[50].

- Social Skills Coaching with Chatbots or Robots: A child with autism might find it less intimidating to practice social greetings or emotional understanding with a friendly robot or animated avatar. AI chatbots have been developed to simulate conversations and teach empathy by guiding users on how to respond appropriately in social scenarios[52][53]. For example, an AI might role-play a peer on the playground saying “hi, want to play?”, and coach the child on recognizing emotions or replying with a proper greeting. These systems can provide consistent, patient practice without the variability of human partners. One pilot study at Stanford introduced a chatbot named “Noora” as a social coach; it helped individuals with ASD practice responding with empathy by giving immediate feedback on their answers[52][54]. While that study was in adults, similar concepts could be tailored for children (in simpler language, with more visual cues). The idea is that AI could bridge the gap when human therapists or peers aren’t available in the moment.

- AI for Early Detection and Tailored Intervention: Another exciting use of AI is in analyzing data (like videos of a child or brain scans) to flag early signs of communication trouble. Machine learning algorithms can sift through subtle behaviors – for instance, how often a baby responds to their name or points to request something – and help identify autism risk earlier than some traditional methods[55][56]. Early identification means interventions can start sooner, taking advantage of that peak brain plasticity before age 3. Beyond diagnosis, AI could assist in customizing therapy. Each autistic child has a unique profile of strengths and challenges; AI could analyze which exercises a child improves with and which they struggle with, then recommend an optimized therapy plan. Essentially, it’s like having a super-personalized coach that learns along with the child.

- Brain-Computer Interfaces and Neurofeedback: Looking further into the future, there’s intriguing research on devices that might allow children who can’t speak to communicate via brain signals. For instance, an EEG cap or other interface could detect certain patterns when the child is trying to say “hello” or indicate a need, and an AI could translate that into a synthesized voice output. While this is still experimental, it builds on successes seen in adults who use neural implants to type out words by thought. For autism, especially for those with apraxia (difficulty coordinating mouth movements), an AI-driven brain interface might bypass the oral motor barrier and give them a “voice” through a device. Additionally, neurofeedback systems (where a person tries to modulate their own brain activity with real-time feedback) might be enhanced by AI to target specific brain connections – for example, training an individual to increase activity in their Broca’s area when forming words. AI would be crucial in interpreting the complex brain signals and providing adaptive feedback.
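The idea in the first bullet, an app that makes exercises harder or easier as the child progresses, can be sketched as a simple “staircase” rule. This is a hypothetical illustration and not how any of the cited apps works: the class name, the five difficulty levels, the window of five recent attempts, and the 80% and 40% thresholds are all assumptions.

```python
from collections import deque


class AdaptiveDifficulty:
    """Raise or lower exercise difficulty based on recent success (illustrative only)."""

    def __init__(self, level=1, min_level=1, max_level=5, window=5):
        self.level = level
        self.min_level = min_level
        self.max_level = max_level
        # Only the most recent `window` attempts are remembered.
        self.recent = deque(maxlen=window)

    def record(self, success):
        """Store one attempt and return the (possibly updated) difficulty level."""
        self.recent.append(bool(success))
        if len(self.recent) < self.recent.maxlen:
            return self.level  # not enough attempts yet to judge
        rate = sum(self.recent) / len(self.recent)
        if rate >= 0.8 and self.level < self.max_level:
            self.level += 1      # mostly succeeding: make it slightly harder
            self.recent.clear()  # start a fresh window at the new level
        elif rate <= 0.4 and self.level > self.min_level:
            self.level -= 1      # mostly struggling: make it easier
            self.recent.clear()
        return self.level


coach = AdaptiveDifficulty()
for outcome in [True, True, True, True, True]:
    coach.record(outcome)
```

Clearing the window after each change keeps one good or bad streak from moving the level twice, which is one way to avoid sudden jumps that might frustrate a child.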

Encouraging Mouth Movement Through Play and Modeling

Young autistic children often learn best with multi-sensory, play-based activities rather than abstract instruction. For example, oral-motor play can strengthen the muscles of the mouth and draw the child’s attention to lips and tongue. Simple games like blowing bubbles, using a straw, or chewing crunchy foods are effective. A speech therapist recommends “bubbles – blowing bubbles requires muscle tension in the cheeks and coordination of breathing” and silly-face games (e.g. making “fish lips” or exaggerated chewing) to engage lips and jaw[1]. Encourage activities that involve sucking, chewing or biting – for instance, offer safe chewy necklaces, gum or crunchy snacks – because these give strong oral sensory feedback and help regulate the child’s urge to bite or bang their head[2]. Always pair such activities with fun: let the child know a bubble blew up because they blew it, or praise them for “chomping” a carrot, to make the exercise motivating.

- Blowing and Chewing Games: Blow bubbles together, use whistles or pinwheels, and give the child safe chewable toys, crunchy veggies or gum. These target jaw and tongue muscles and provide satisfying sensory input[2][1].

- Straws and Sensory Tools: Have the child sip drinks through a thin straw or play with chewable “silly straws.” A vibrating toothbrush or a cold spoon on the lips can also heighten mouth awareness[3].

- Mirror Play: Sit facing the child so they see your face (or put your face at their eye level) and play mirror-games. Make goofy mouths (blow raspberries, stick out tongue, give pretend sneezes) slowly and exaggeratedly and invite them to watch or touch your lips and tongue[4]. This visual focus is key – as one expert notes, “drawing attention to your mouth, including your tongue,” and even letting the child feel your mouth movements, encourages imitation[5].

- Imitation and Music: Sing songs with simple repeated sounds and encourage actions. Rhymes like “The Wheels on the Bus” (e.g. wiggling lips or making vehicle sounds) teach how sounds relate to mouth shapes. Join in whatever noises they make (imitate their babble back) to build trust, then gently model new sounds. Studies show that children with autism often have imitative delays[6], so we let them lead and we copy them first, earning their attention.

Importantly, avoid simply pointing at your mouth or an alphabet card and demanding “say A!”, because autistic toddlers may not follow a pointing cue. Instead, demonstrate the mouth movement in a playful context. For example, roll a toy toward you each time you make an “ahh” sound, so the child links the sound to a fun result. Consistency and patience are key. Praise any attempt, or even eye contact with your face. Over time, these playful activities stimulate the brain’s speech regions by pairing movement, sound, and social engagement[4][6].

Sensory Supports and Alternatives to Head-Banging

If the child tends to bang their head when frustrated, offer alternative sensory outlets. Often head-banging is a way to self-soothe or get attention. To reduce it, give the child other sensory input: let them squeeze a soft ball, jump on a small trampoline, or use a weighted blanket or vest to help them calm down[7]. For oral sensory needs, keep something chewy or crunchy handy so they bite that instead of biting their hand or banging their head. For example, a chewy necklace or chewy pencil topper can satisfy their urge for mouth input. These alternatives redirect the behavior (rather than drawing attention to the banging) and gradually teach the child other ways to cope or communicate. Once a doctor has ruled out pain as a cause, head-banging typically decreases over time as the child becomes more successful at using sounds to get results.

Simple AI or App Ideas for Mouth/Alphabet Practice

Technology can augment these play strategies by providing vivid visual cues and repetition in a fun format. Even a basic app could help show how to shape each sound. For example, one could develop a simple iPad app where tapping a letter plays a short animation of an open mouth pronouncing it. Apps like Speech Tutor already do this: Speech Tutor displays a virtual mouth forming each selected sound and gives tips on how to produce it[8]. Similarly, the Speech Sounds Visualized app uses videos and 3D animations (even x-ray clips) to show exactly where the tongue and lips go for each sound[9]. These serve as concrete visual models, turning an abstract alphabet into a moving picture of speech.

An even more engaging tool would use the device’s front camera or AR. Imagine an app that tracks the child’s face: it could overlay a cartoon tongue on their mouth and prompt them to “copy me.” For instance, the app might light up regions of the mouth (teeth, tongue) and show a smiling cartoon face making the target sound. If the child moves their mouth correctly, the app gives cheerful feedback (music, points, or a dancing character). In practice, similar technology is used in tools like VAST (Video-Assisted Speech Therapy): it even includes a “mirror mode” so kids can watch themselves making sounds and get instant visual feedback[10]. Such self-monitoring helps them fine-tune their mouth movements; as one description notes, “Using a mirror while practicing speech sounds can provide visual feedback to help improve muscle coordination”[10].
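One way such an app could judge whether the child has opened their mouth is to compare a few lip landmarks supplied by a face tracker. The sketch below assumes that some tracker already provides four (x, y) points: the upper lip, the lower lip, and the left and right mouth corners. The function names, the landmark format, and the 0.35 threshold are hypothetical, and a real app would likely need to calibrate the threshold for each child.

```python
import math


def mouth_open_ratio(upper_lip, lower_lip, left_corner, right_corner):
    """Vertical lip gap divided by mouth width.

    Dividing by the width makes the value roughly independent of how
    close the child's face is to the camera.
    """
    width = math.dist(left_corner, right_corner)
    if width == 0:
        raise ValueError("mouth corners coincide; cannot compute a ratio")
    return math.dist(upper_lip, lower_lip) / width


def is_open_enough(ratio, threshold=0.35):
    """Return True when the mouth counts as open (threshold is an assumed value)."""
    return ratio >= threshold


# Made-up pixel coordinates for an open mouth and a nearly closed one.
open_ratio = mouth_open_ratio((5, 0), (5, 4), (0, 2), (10, 2))
closed_ratio = mouth_open_ratio((5, 2), (5, 2.5), (0, 2), (10, 2))
```

With these made-up points, the open mouth passes the check and the nearly closed one does not, so the app could trigger its cheerful feedback only in the first case.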

Other app approaches include video modeling: watching peers or engaging characters speak. For example, the Speech Blubs app has “kid experts” who model words and motivate children to copy them[11]. Even if a child struggles to imitate, seeing another child make a sound can attract their attention. According to VAST’s developers, combining animated videos with sound can effectively teach kids to focus on mouth shapes: “VAST videos have been highly effective in increasing a child’s ability to attend to a communication partner’s mouth”[12]. An app could emulate this by including short clips or avatars saying “aaa,” “buh,” etc., with a clear view of their articulators.

In short, a simple AI or iOS app for this purpose would:

- Show clear mouth animations for each sound or letter (like Speech Tutor[8] or Speech Sounds Visualized[9]).

- Use the camera/mirror so the child sees themselves next to the model, helping bridge imitation gaps[10].

- Give immediate feedback (smiley sticker, sound effect) when the child tries a sound or opens their mouth.

- Limit clutter: focus on one sound/letter at a time, use bright colors, simple instructions (e.g. a big arrow pointing to an animated open mouth).

Such an app is not a magic cure: success varies. It might motivate some children, especially if they enjoy screens, but others may find it confusing or too “busy.” So it’s best used in short spurts and always alongside play. If the child loses interest, return to real-world games and try again later.

Some Key Takeaways and Strategy

- Encourage imitation through play (“Yes” to modeling): Use fun games that naturally show how to open the mouth and make sounds, such as bubbles, silly faces, and songs. Children with ASD often don’t mimic immediately[6], but these activities may still gently engage the brain’s imitation (mirror-neuron) circuits. Start by copying the child, then slowly introduce new mouth actions for them to try.

- Demonstrate, don’t just point: Autistic toddlers may not follow a pointing gesture, so avoid just pointing at an alphabet chart or your lips. Instead, physically model the mouth shapes (and even guide their hand or chin if needed). Use visual and tactile cues – like stickers on your lips or letting them feel your tongue – to make the idea concrete[5].

- Try tech tools, but with caution: Interactive apps can provide excellent visual cues and motivation[9][8]. However, each child is different: some respond well to an animated character or peer model[11], others may tune out. If an app isn’t working (or if screen time is an issue), focus on hands-on play. Think of apps as one part of the toolbox.

- Combine approaches (“Both”): The best strategy is to blend methods. Use play and speech games at home or therapy sessions while occasionally letting the child explore educational apps. Celebrate every small success, whether it’s a sound, a mouth opening, or even paying attention to the model. Over time, this mix of fun practice and gentle instruction helps the child’s speech areas in the brain become more active.

By keeping sessions lighthearted and multi-sensory, you engage the child’s attention and the brain pathways for speech. In time, even a child who wouldn’t initially imitate may begin to open their mouth for sounds, ideally replacing head-banging with curiosity about talking. The therapy resources cited here make the same point: when articulatory cues are paired with playful engagement and visual modeling, children learn more effectively[4][12]. Consistent, joyful practice is the key.

Sources for the practical sections (from “Encouraging Mouth Movement Through Play and Modeling” onward): Techniques and app ideas are drawn from speech-therapy guides and autism resources[13][5][9][11][10][12][8]. These emphasize oral-motor activities (chewing, bubbles, mirror games), as well as simple tech (animated mouths, video modeling) to support speech development in children with ASD.

[1] [2] [3] [4] [5] [13] Oral Motor Exercises for Children with Autism – Autism Parenting Magazine

[6] Teaching imitation to children with autism, by QTrobot for autism

[7] Autism & Headbanging: sensory strategies for head banging — SpecialKids.Company

[8] Our 10 Favorite Speech and Language Apps for Kids – North Shore Pediatric Therapy

https://www.nspt4kids.com/parenting/our-10-favorite-speech-and-language-apps-for-kids

[9] Speech Therapy | Speech Sounds Visualized

https://www.speechsoundsvisualized.org

[10] [12] Autism Spectrum and Apraxia | VastSpeech

[11] Speech Learning App for Kids / Speech Blubs

Sources for the scientific sections (through “Could AI Offer New Solutions for Communication in Autism?”): The information in these sections is based on current scientific research in neuroscience, child development, and autism intervention[9][10][6][45], as cited throughout. These findings reflect our best understanding as of 2025, and ongoing research (including exciting AI advancements[52][51]) continues to improve the outlook for children on the autism spectrum.

[1] Why 0-3? Explore Baby Brain Science | ZERO TO THREE

https://www.zerotothree.org/why-0-3

[2] [3] Speech & Language | Memory and Aging Center

https://memory.ucsf.edu/brain-health/speech-language

[4] [5] [6] [12] [13] [14] [26] [27] Atypical brain activation patterns during a face‐to‐face joint attention game in adults with autism spectrum disorder – PMC

https://pmc.ncbi.nlm.nih.gov/articles/PMC6414206

[7] [8] [18] [19] [20] [21] [30] [31] [32] [33] [34] [35] [36] [37] [38] [39] [40] [41] [42] [43] [44] Frontiers | Rehabilitative Interventions and Brain Plasticity in Autism Spectrum Disorders: Focus on MRI-Based Studies

https://www.frontiersin.org/journals/neuroscience/articles/10.3389/fnins.2016.00139/full

[9] [10] [11] [22] [23] [24] [25] Brain Function Differences in Language Processing in Children and Adults with Autism – PMC

https://pmc.ncbi.nlm.nih.gov/articles/PMC4492467

[15] [16] [17] Imitation, mirror neurons and autism – PubMed

https://pubmed.ncbi.nlm.nih.gov/11445135

[28] [29] EEG changes associated with autistic spectrum disorders | Neuropsychiatric Electrophysiology | Full Text

https://npepjournal.biomedcentral.com/articles/10.1186/s40810-014-0001-5

[45] [46] [47] Treatment and Intervention for Autism Spectrum Disorder | Autism Spectrum Disorder (ASD) | CDC

https://www.cdc.gov/autism/treatment/index.html

[48] DEVELOPING DRUGS FOR CORE SOCIAL AND … – PubMed Central

https://pmc.ncbi.nlm.nih.gov/articles/PMC2566849

[49] [50] [51] AI Transforming Autism Speech Therapy | Personalized Support

[52] [53] [54] An AI Social Coach Is Teaching Empathy to People with Autism | Stanford HAI

https://hai.stanford.edu/news/an-ai-social-coach-is-teaching-empathy-to-people-with-autism

[55] [56] Multimodal AI for risk stratification in autism spectrum disorder – Nature